What Is Wake Word Detection?

Wake word detection, also known as keyword spotting, is an always-on audio monitoring technique that listens for a specific trigger phrase (a "wake word" like "Hey Siri," "Alexa," or "OK Google") to enable hands-free activation of our favorite voice assistants. It is already changing the way we interact with our digital devices.

Here's how it works: Your device's microphone is constantly active, but the audio is handled only by a small, low-power detector. It's not recording or transmitting your audio anywhere; it's simply waiting, listening for that magic word that will bring your voice assistant to life. When the system detects the wake word, it hands control to the full voice assistant, which switches into conversation mode, ready to carry out your voice commands.

This hands-free, always-ready approach offers unparalleled convenience. No more hunting for your phone or tapping a button to activate Siri or Alexa — just speak the word, and your AI helper is at your service. But this convenience comes with a tradeoff: the constant microphone monitoring means your device is always listening, albeit only for that specific wake word. This has raised privacy concerns, as users worry about the implications of having an always-on audio sensor in their homes and pockets.

Fortunately, the future of wake word detection offers a solution to this privacy challenge. Innovations in on-device artificial intelligence are enabling wake word detection to happen right on your device, without any audio data ever leaving your local network or being transmitted to the cloud. This "edge computing" approach means your personal conversations and activities remain private, while you still enjoy the hands-free convenience of voice control. It's a win-win that is poised to transform the way we interact with our digital world in the years to come.

How Keyword Spotting Works Under the Hood

The core technology powering the wake word detection systems of 2026 is keyword spotting, a specialized form of automatic speech recognition (ASR) that focuses on identifying a specific trigger phrase or "wake word" within a continuous audio stream. Rather than transcribing full sentences, a keyword spotter uses a lightweight neural network trained only to detect the presence (or absence) of a predefined keyword.

The key to making keyword spotting work on always-on, embedded devices is a two-stage architecture. First, a tiny "always-on" model — often a compact convolutional neural network (CNN) or recurrent neural network (RNN) — continuously monitors the audio input. This initial model is optimized for extremely low power consumption and fast, accurate detection of the target wake word, even when running non-stop.

When the wake word is detected, the device activates a larger, more capable speech recognition model that handles follow-up voice commands or queries. This two-stage approach allows devices to remain constantly vigilant for the wake phrase without running power-hungry, full-fledged speech recognition all the time.
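The two-stage flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular assistant is built: `frame_energy` is a toy stand-in for the tiny always-on model (a real one would be a trained CNN or RNN), and `TwoStageListener`, `threshold`, `window`, and `command_frames` are hypothetical names chosen for this example.

```python
from collections import deque
from typing import Callable, Deque, List, Optional, Sequence

Frame = Sequence[float]  # one short chunk of audio samples


def frame_energy(frame: Frame) -> float:
    """Toy stand-in for the always-on model: mean squared amplitude."""
    return sum(s * s for s in frame) / len(frame) if frame else 0.0


class TwoStageListener:
    """Runs a cheap detector on every frame; calls the costly recognizer only after a wake."""

    def __init__(
        self,
        stage_one: Callable[[Frame], float],
        stage_two: Callable[[List[Frame]], str],
        threshold: float = 0.5,
        window: int = 3,
        command_frames: int = 2,
    ) -> None:
        self.stage_one = stage_one
        self.stage_two = stage_two
        self.threshold = threshold
        self.command_frames = command_frames
        self.recent_scores: Deque[float] = deque(maxlen=window)
        self.pending: Optional[List[Frame]] = None  # None while idle
        self.stage_two_calls = 0

    def feed(self, frame: Frame) -> Optional[str]:
        if self.pending is not None:
            # Awake: buffer command audio, then hand it to stage two once.
            self.pending.append(frame)
            if len(self.pending) < self.command_frames:
                return None
            command, self.pending = self.pending, None
            self.stage_two_calls += 1
            return self.stage_two(command)

        # Idle: only the cheap model runs, smoothed over the last few frames.
        self.recent_scores.append(self.stage_one(frame))
        smoothed = sum(self.recent_scores) / len(self.recent_scores)
        window_full = len(self.recent_scores) == self.recent_scores.maxlen
        if window_full and smoothed >= self.threshold:
            self.recent_scores.clear()
            self.pending = []  # wake detected: start collecting command audio
        return None


quiet, loud = [0.0] * 4, [1.0] * 4
listener = TwoStageListener(
    frame_energy, lambda frames: f"recognized {len(frames)} command frames"
)
results = [listener.feed(f) for f in [quiet, loud, loud, loud, quiet, quiet]]
print(results)
print("stage two ran", listener.stage_two_calls, "time(s)")
```

The point of the design is visible in `stage_two_calls`: the expensive recognizer runs once per wake event, while the cheap scorer runs on every frame.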

The models underlying keyword spotting systems are trained on vast datasets of audio recordings, focusing on isolating the acoustic patterns and "phoneme" sequences that correspond to the target wake word. By learning these distinct audio signatures, the neural networks can accurately identify the trigger phrase, even amid background noise or spoken variations.

Of course, developing an effective keyword spotter involves balancing a number of competing priorities. Designers must minimize false positives (detecting the wake word when it wasn't said) while also reducing false negatives (missing a genuine wake word). This tradeoff is further complicated by the constraints of running the model on low-power, embedded hardware.
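To make the tradeoff concrete, here is a small sketch that sweeps a decision threshold over detector scores and reports both error rates. The scores in `SAMPLES` are invented for illustration and `error_rates` is a hypothetical helper, not part of any library.

```python
from typing import List, Tuple

# (detector score, was the wake word actually spoken?) -- made-up values
SAMPLES: List[Tuple[float, bool]] = [
    (0.95, True), (0.80, True), (0.60, True), (0.40, True),
    (0.70, False), (0.30, False), (0.20, False), (0.05, False),
]


def error_rates(samples: List[Tuple[float, bool]], threshold: float) -> Tuple[float, float]:
    """Return (false positive rate, false negative rate) at a given threshold."""
    positives = [score for score, is_wake in samples if is_wake]
    negatives = [score for score, is_wake in samples if not is_wake]
    false_positives = sum(1 for score in negatives if score >= threshold)
    false_negatives = sum(1 for score in positives if score < threshold)
    return false_positives / len(negatives), false_negatives / len(positives)


for threshold in (0.25, 0.50, 0.75):
    fpr, fnr = error_rates(SAMPLES, threshold)
    print(f"threshold={threshold:.2f}  false positives={fpr:.2f}  false negatives={fnr:.2f}")
```

On this toy data, raising the threshold from 0.25 to 0.75 drives the false positive rate from 0.50 down to 0.00 while the false negative rate climbs from 0.00 to 0.50, which is the balance a designer has to strike.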

But with steady progress in on-device AI and ultralow-power silicon, the technology behind wake word detection is evolving rapidly. In the years ahead, we can expect more accurate, energy-efficient keyword spotting systems that integrate seamlessly with our smart devices and voice assistants.

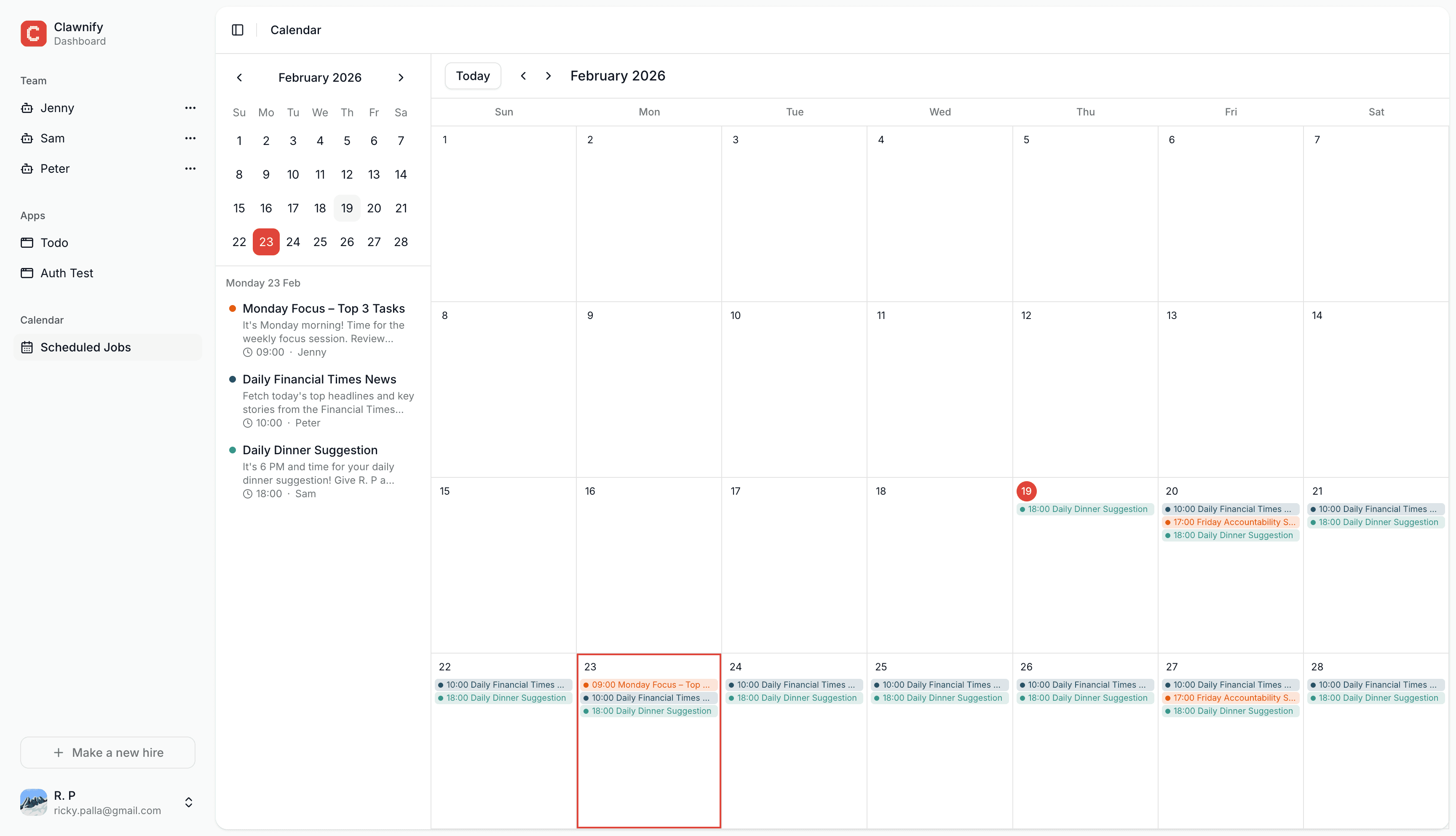

Beyond Smart Speakers: Where Wake Words Are Deployed in 2026

The rise of voice-enabled AI assistants like Alexa and Siri in the 2010s was just the beginning. As we head into 2026, wake word detection technology has proliferated far beyond smart speakers, transforming how we interact with an ever-growing array of digital devices and systems.

In the automotive sector, voice control powered by wake word detection has become standard, allowing drivers to safely issue commands to in-car infotainment systems, climate controls, and even to manage smart home devices remotely. Meanwhile, enterprise and industrial settings have widely adopted wake word-enabled interfaces for hands-free operation of machinery, equipment monitoring, and workplace productivity tools.

The ubiquity of wake word detection extends to the smartphones and tablets that have become essential companions in our daily lives. Android developers now seamlessly integrate wake word activation into their apps, enabling voice commands for everything from scheduling and note-taking to real-time language translation and virtual assistance.

But the revolution in wake word detection goes even deeper, with the technology becoming a fundamental building block for the surging Internet of Things. From voice-controlled smart home hubs to industrial sensors and factory automation systems, the ability to activate devices hands-free via a wake word is quickly becoming table stakes. As on-device AI processing power continues to improve, these IoT implementations are moving beyond the cloud to deliver faster response times and enhanced privacy and security.

In 2026, wake word detection is no longer a novel feature — it's core infrastructure that underpins the next generation of intelligent, voice-enabled systems. As this technology continues to evolve and expand its reach, the way we interact with the digital world is fundamentally changing, ushering in a new era of seamless, voice-driven experiences.

Privacy and the Case for On-Device Processing

In the era of ubiquitous voice interfaces, the privacy implications of always-on wake word detection cannot be overstated. The traditional approach of relying on cloud-based keyword spotting models exposes users to a range of risks, from the storage and processing of potentially sensitive audio recordings to the threat of accidental triggers activating voice assistants without user consent.

Under strict data privacy regulations like the EU's GDPR and California's CCPA, the legal and regulatory risks of cloud-based wake word detection are substantial. Organizations must implement robust data protection measures, obtain explicit user consent, and be prepared to respond to data subject access requests. Failure to comply can result in hefty fines and severe reputational damage.

Fortunately, the rapid progress of on-device AI is transforming the landscape of wake word detection. Modern, lightweight machine learning models can now deliver production-grade accuracy entirely on the user's device, without the need to send any audio data to the cloud. This means that voice recordings never leave the hardware, ensuring that user privacy is protected at the source.

On-device processing also provides additional benefits beyond privacy. By eliminating the network round trip and connectivity requirements of cloud-based systems, wake word detection can respond with low latency and no network dependency. This is a game-changer for mission-critical applications and environments with unreliable internet access.

As the world embraces the convenience of voice interfaces, it is clear that the future of wake word detection lies in on-device AI. By prioritizing privacy and security at the hardware level, technology companies can build trust with consumers and pave the way for the next generation of voice-enabled experiences.

How OpenClaw Implements Wake Word Detection Across All Your Devices

At the heart of OpenClaw's wake word detection capabilities is a powerful and flexible approach that seamlessly integrates across all your connected devices. Unlike traditional voice assistants that rely on centralized cloud processing, OpenClaw takes a decentralized, on-device approach that puts you in control of your personal data and ensures sub-second response times.

The key to this system is the voicewake.json file, which acts as a central repository for all your trigger words and wake commands. This file is managed by the OpenClaw Gateway and shared in real time with every connected client device via a WebSocket broadcast. This means that any changes you make to your voice wake settings, whether adding new commands or adjusting existing ones, are reflected across your entire device ecosystem as soon as the broadcast arrives.

Under the hood, the process works like this:

voicewake.get: When a device first connects to the OpenClaw ecosystem, it retrieves the latest version of the voicewake.json file, ensuring that it has the most up-to-date trigger word configurations.

voicewake.set: Whenever you update your voice wake settings through the OpenClaw app or web interface, the Gateway immediately updates the voicewake.json file and broadcasts the changes to all connected devices.

voicewake.changed: Each client device actively listens for changes to the voicewake.json file, and when a modification is detected, it automatically updates its own local keyword detection models, ensuring seamless and consistent wake word functionality across your entire setup.
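The get / set / changed cycle above can be modeled with a small in-memory sketch. Only the three names `voicewake.get`, `voicewake.set`, and `voicewake.changed` come from the description; everything else here is an assumption made for illustration, including the `Gateway` and `Client` classes, the `{"triggers": [...]}` payload shape, and the use of plain callbacks in place of a real WebSocket connection.

```python
import json
from typing import Callable, Dict, List, Optional

Message = Dict[str, object]


class Gateway:
    """In-memory stand-in for the Gateway that owns the wake word config."""

    def __init__(self, triggers: List[str]) -> None:
        self._config: Dict[str, List[str]] = {"triggers": list(triggers)}
        self._subscribers: List[Callable[[Message], None]] = []

    def connect(self, on_message: Callable[[Message], None]) -> None:
        """Register a client callback (stands in for an open socket)."""
        self._subscribers.append(on_message)

    def handle(self, method: str, params: Optional[Dict[str, List[str]]] = None) -> Dict[str, List[str]]:
        if method == "voicewake.get":
            # Return a deep copy so callers cannot mutate gateway state.
            return json.loads(json.dumps(self._config))
        if method == "voicewake.set":
            if not params or "triggers" not in params:
                raise ValueError("voicewake.set needs a 'triggers' list")
            self._config = {"triggers": list(params["triggers"])}
            event: Message = {
                "event": "voicewake.changed",
                "payload": self.handle("voicewake.get"),
            }
            for notify in self._subscribers:  # broadcast to every client
                notify(event)
            return self.handle("voicewake.get")
        raise ValueError(f"unknown method: {method}")


class Client:
    """A device that fetches the config on connect and follows change events."""

    def __init__(self, name: str, gateway: Gateway) -> None:
        self.name = name
        gateway.connect(self.on_message)
        self.triggers: List[str] = gateway.handle("voicewake.get")["triggers"]

    def on_message(self, message: Message) -> None:
        if message.get("event") == "voicewake.changed":
            payload = message["payload"]
            self.triggers = list(payload["triggers"])  # reload local detector here


gateway = Gateway(["openclaw"])
laptop, phone = Client("laptop", gateway), Client("phone", gateway)
gateway.handle("voicewake.set", {"triggers": ["openclaw", "computer"]})
print(laptop.triggers, phone.triggers)
```

In a real client, the line that reassigns `self.triggers` is where the local keyword detector would be reconfigured with the new trigger list.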

Of course, the platform reality is a bit more complex, as different operating systems handle wake word detection in different ways. On macOS and iOS devices, for example, detection runs locally, using the on-device machine learning capabilities of those platforms. Android devices, on the other hand, currently rely on a more manual approach, capturing the microphone input and processing it through the OpenClaw engine.

Regardless of the underlying implementation, the end result is a wake word detection system that just works, allowing you to effortlessly trigger your voice commands across all your connected devices without compromising your privacy or data security. It's the future of voice control, and OpenClaw is leading the way.