
The rise of local AI agents has brought a surprising hardware hero to the forefront: the Mac Mini. While traditional AI development often requires bulky, power-hungry servers equipped with expensive NVIDIA GPUs, the transition to Apple Silicon (M1, M2, M3, and now M4) has democratized high-performance inference. The secret sauce is Unified Memory Architecture (UMA). On a typical PC with a discrete graphics card, system RAM and GPU VRAM are separate pools, and a model has to fit into the much smaller VRAM to run fast. Apple Silicon instead lets the CPU and GPU share a single, high-bandwidth pool of RAM. For OpenClaw users, this means that with enough RAM and a quantized model, your agent can run large 32B or even 70B-parameter models locally without hitting the VRAM bottleneck that plagues most consumer-grade PCs.

When running a local instance, memory is everything. In an M4 Pro setup with 64GB of RAM, you can dedicate most of that memory to model weights. The result is impressive performance, often reaching 11-12 tokens per second on models that would crawl on a standard desktop. That throughput matters for an autonomous agent that needs to "think" through multi-step plans, search the web, and execute code in real time. If inference is too slow, every step of the agent's reasoning loop drags, which leads to timeouts and errors. Apple Silicon helps keep OpenClaw responsive enough to actually be useful.
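A back-of-the-envelope way to see why memory is everything: weight memory is roughly parameters × bits-per-weight ÷ 8, and single-stream decoding is usually limited by memory bandwidth, so tokens per second is capped near bandwidth ÷ bytes of weights read per token. The sketch below is an estimate under stated assumptions, not a benchmark: it assumes about 4.5 effective bits per weight for a typical 4-bit quantization and takes bandwidth as an input (273 GB/s is Apple's published figure for the M4 Pro). KV cache and runtime overhead are ignored.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only (no KV cache or runtime overhead)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def decode_tokens_per_s_ceiling(
    params_billion: float, bits_per_weight: float, bandwidth_gb_s: float
) -> float:
    """Upper bound on decode speed if each token reads every weight once from RAM."""
    return bandwidth_gb_s / weight_memory_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    # Assumption: ~4.5 effective bits/weight for a common 4-bit quantization.
    bits = 4.5
    for size in (8, 32, 70):
        mem = weight_memory_gb(size, bits)
        tps = decode_tokens_per_s_ceiling(size, bits, bandwidth_gb_s=273)
        print(f"{size}B: ~{mem:.1f} GB of weights, at most ~{tps:.0f} tok/s")
```

For a 32B model this works out to about 18 GB of weights and a ceiling of roughly 15 tokens per second, which is consistent with the 11-12 tokens per second quoted above once real-world overhead is included. A 70B model at the same quantization needs about 39 GB, which still fits in 64GB of unified memory.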

| Hardware Generation | Best For | Local Performance |
|---|---|---|
| Mac Mini M4 (Base) | Cloud API-first setups | Great for 7B-8B models |
| Mac Mini M4 Pro (64GB) | Heavy local inference | Runs 32B models smoothly |
| Legacy Intel Macs | Legacy workflows | Not recommended for local AI |

Beyond memory throughput, the integrated GPU in these chips does the heavy lifting. Popular local runtimes such as Ollama and Apple's MLX framework use Metal to run the matrix multiplications that power modern LLMs on the GPU, leaving the CPU cores free to manage the OpenClaw gateway and your desktop integrations. (The Neural Engine is mostly used by Core ML workloads rather than by these LLM runtimes.) Whether you're self-hosting on a device you already own or buying a dedicated "AI workstation," the Mac Mini offers arguably the best "intelligence-per-square-inch" ratio on the market today. For those who want this power without the local hardware management, Clawnify.com provides a managed version of this same agentic infrastructure in the cloud.

One of the most compelling use cases for OpenClaw is the "Always-On Assistant": an agent that monitors your emails, updates your CRM, and handles customer inquiries while you're offline. To achieve this, the host machine must run 24/7. This is where the Mac Mini's extreme power efficiency becomes a game-changer. A high-end AI PC with a discrete GPU can draw 100 watts or more even at idle, which means significant electricity bills and heat to manage. In contrast, an Apple Silicon Mac Mini idles at a mere 3 to 6 watts. Even under heavy AI inference load, it stays quiet and draws a fraction of the power of a discrete-GPU workstation.

This efficiency allows you to position the Mac Mini as a legitimate "AI appliance" in your office or a closet: a tireless digital employee that never sleeps. But efficiency isn't the Mac Mini's only advantage. There is one "killer feature" that only a Mac can provide for OpenClaw: native iMessage integration. Because the gateway runs on macOS, your agent can send and receive messages through your iCloud account. You can interact with your AI agent through the same Blue Bubble interface you use for friends and family, complete with support for attachments, reactions, and thread continuity.

- Eco-Friendly: Annual power cost typically under $20 for 24/7 operation, depending on your electricity rate.
- Invisible: Near-silent operation with no overheating, even when running heavy Llama 3 models.
- Unified Comms: Control your agent via iMessage (exclusive to a macOS host).
- Local Files: Directly access your local documents and spreadsheets for context.
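The "under $20 a year" figure is simple arithmetic: average watts × 8,760 hours ÷ 1,000 gives kWh per year, multiplied by your electricity price. The sketch below assumes a price of $0.17/kWh and a few illustrative average draws; substitute your own tariff and measured wattage.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours of 24/7 operation


def annual_energy_cost(avg_watts: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for a device drawing avg_watts around the clock."""
    kwh_per_year = avg_watts * HOURS_PER_YEAR / 1000
    return kwh_per_year * price_per_kwh


if __name__ == "__main__":
    price = 0.17  # assumed $/kWh; replace with your local rate
    scenarios = [("mostly idle Mac Mini", 5), ("light inference", 12), ("GPU PC at idle", 100)]
    for label, watts in scenarios:
        print(f"{label:>22}: ${annual_energy_cost(watts, price):.2f}/year")
```

At the assumed rate, a 5 W average comes to roughly $7 a year, and the $20 line is only crossed once the average draw rises above about 13 W. Sustained heavy inference raises the average, so the bullet holds best for agents that spend most of their time idle.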

While a Linux VPS might be the standard for many web services, the unique combination of low power and proprietary Apple services makes the Mac Mini a superior choice for personal and small business automation. If you're a team that lives on Slack or WhatsApp instead, Clawnify.com offers the perfect middle ground, providing a managed OpenClaw host that bridges these enterprise channels without requiring you to keep a physical Mac running in your office.


For high-volume users, the financial argument for an OpenClaw Mac Mini setup is strong. Cloud models like Claude 3.5 Sonnet and GPT-4o are incredibly capable, but they are also expensive when accessed via API. For an active agent that performs hundreds of searches, reads dozens of emails, and iterates on code blocks daily, API costs can easily spiral into the hundreds of dollars per month. A base M4 Mac Mini starts at $599, so at roughly $100 per month in avoided API fees the hardware pays for itself in about six months, and sooner if your spend is higher.

Software tools like Ollama, LM Studio, and the MLX framework have made running these local models as simple as a single CLI command. OpenClaw is designed to plug directly into these local providers using an OpenAI-compatible API endpoint. By pointing your agent at localhost:11434 (Ollama's default port, which serves its OpenAI-compatible API under the /v1 path), you unlock unlimited reasoning, drafting, and searching with no per-token cost beyond electricity. You can run hundreds of "heartbeat" checks every day, asking your agent to monitor competitors or summarize news, without worrying about per-token billing or monthly spend.
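To make "OpenAI-compatible endpoint" concrete, here is a minimal sketch of the kind of request a client sends to Ollama's /v1/chat/completions route, using only the Python standard library. It does not show OpenClaw's own configuration format (that is not covered here); it assumes `ollama serve` is running locally and that a model named `llama3` has already been pulled.

```python
import json
import urllib.request

OLLAMA_BASE_URL = "http://localhost:11434/v1"  # Ollama's OpenAI-compatible API root


def build_chat_request(
    model: str, prompt: str, base_url: str = OLLAMA_BASE_URL
) -> urllib.request.Request:
    """Build a POST request in the OpenAI chat-completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model: str, prompt: str) -> str:
    """Send the request to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(model, prompt), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Assumes the model was pulled first, e.g. with `ollama pull llama3`.
    print(chat("llama3", "Summarize today's top three tasks in one sentence."))
```

Because the request and response shapes follow the OpenAI format, the same client code can target a cloud provider by changing the base URL and adding an API key header.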

| Cost Factor | Cloud-Only Setup | Mac Mini + Local LLM |
|---|---|---|
| Initial Cost | $0 | From $599 (one-time) |
| Usage Cost | $0.50 - $15.00 per 1M tokens | $0 per token (electricity only) |
| Privacy | Data sent to a third party | 100% local / private |
| Latency | Network dependent | No network round-trip |
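The payback claim depends entirely on how many tokens you would otherwise buy. The sketch below turns the table's per-token prices into a break-even estimate; the 20M-tokens-per-month volume is a hypothetical workload, and the calculation ignores electricity and assumes a local model can actually replace those cloud calls.

```python
def monthly_api_cost(tokens_millions_per_month: float, price_per_million: float) -> float:
    """API spend per month for a given token volume and per-1M-token price."""
    return tokens_millions_per_month * price_per_million


def breakeven_months(hardware_cost: float, monthly_api_spend: float) -> float:
    """Months until a one-time hardware purchase equals the API spend it replaces."""
    if monthly_api_spend <= 0:
        return float("inf")  # nothing saved, so the hardware never pays back
    return hardware_cost / monthly_api_spend


if __name__ == "__main__":
    volume = 20  # hypothetical: 20M tokens per month
    for price in (0.50, 5.00, 15.00):
        spend = monthly_api_cost(volume, price)
        print(f"${price:.2f}/1M -> ${spend:.2f}/month, break-even in "
              f"{breakeven_months(599, spend):.1f} months")
```

At $5 per 1M tokens, 20M tokens a month costs $100 and breaks even in just under six months; at the $0.50 low end, the same volume costs $10 a month and takes about five years. Heavy users of premium models see the fastest payback.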

Crucially, OpenClaw supports a hybrid approach. You can configure your agent to use a free local model (like Llama 3 or Mistral) for routine background tasks like email sorting, while only "calling up" an expensive cloud model like Claude 4.5 for complex reasoning or specialized coding. This tiered strategy balances capability against cost. For businesses that want this cost-saving architecture without the local hardware setup, Clawnify.com allows you to bring your own API keys or leverage cost-efficient cloud providers, providing a managed path to agentic ROI.
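The tiered strategy boils down to a routing rule. The sketch below illustrates one possible policy and is not OpenClaw's configuration format: the `Provider` type, the task names, the cloud URL, and the model names are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    name: str
    base_url: str
    model: str


# Hypothetical entries: the cloud URL and both model names are placeholders.
LOCAL = Provider("local", "http://localhost:11434/v1", "llama3")
CLOUD = Provider("cloud", "https://api.example.com/v1", "cloud-reasoning-model")

# Routine background work that a small local model can usually handle.
ROUTINE_TASKS = {"email_sort", "summarize", "heartbeat"}


def route(task_type: str, needs_deep_reasoning: bool = False) -> Provider:
    """Send routine tasks to the free local model; escalate everything else."""
    if task_type in ROUTINE_TASKS and not needs_deep_reasoning:
        return LOCAL
    return CLOUD


if __name__ == "__main__":
    for task in ("email_sort", "heartbeat", "code_review"):
        print(task, "->", route(task).name)
```

Defaulting unknown task types to the cloud model trades cost for safety; flipping the default to local is cheaper but risks weaker answers on tasks nobody classified.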

As we navigate through 2025, many users are facing a common tech dilemma: "Buy now or wait for the M5?" The current Mac Mini with the M4 chip is an absolute powerhouse for AI. With its updated architecture and significantly faster Neural Engine compared to the M1/M2 eras, it is more than capable of handling current OpenClaw workloads. However, Apple has already confirmed that production of the next-generation Mac Mini — powered by the M5 chip — will begin later in 2026 at a brand-new manufacturing facility in Houston, Texas.

The M5 refresh is rumored to focus heavily on "Agentic Infrastructure." That could mean larger unified memory capacities and a Neural Engine redesign tailored to the transformer-based architectures behind local LLMs. If your use case involves running massive, unquantized local models alongside video processing or heavy developer builds, waiting for the M5 Pro might be worth it. For most OpenClaw users today, however, the M4 Pro represents the "Goldilocks" zone: it is available now, it runs current open-weights models quickly, and it is significantly more affordable than the high-end Mac Studio.

- M4 Strategy: Best ROI for immediate deployment. Current base models are plenty for cloud-first or hybrid setups.
- M5 Strategy: Wait if you need 128GB+ of unified memory for research-scale local models.
- Manufacturing: The Houston facility could mean shorter lead times and improved availability for North American businesses in 2026.

The "Always-on AI" revolution won't wait for the next chip cycle. Starting with an M4 Mac Mini today means months of productivity gains that can easily outweigh the incremental spec bump of the M5. Setting up OpenClaw now lets you start building your AgentSkills library and refining your workflows. If you want to start even sooner without waiting for shipping, Clawnify.com allows you to deploy an AI employee in under 5 minutes on enterprise-grade cloud hardware, giving you the power of agentic AI without the "wait for M5" anxiety.

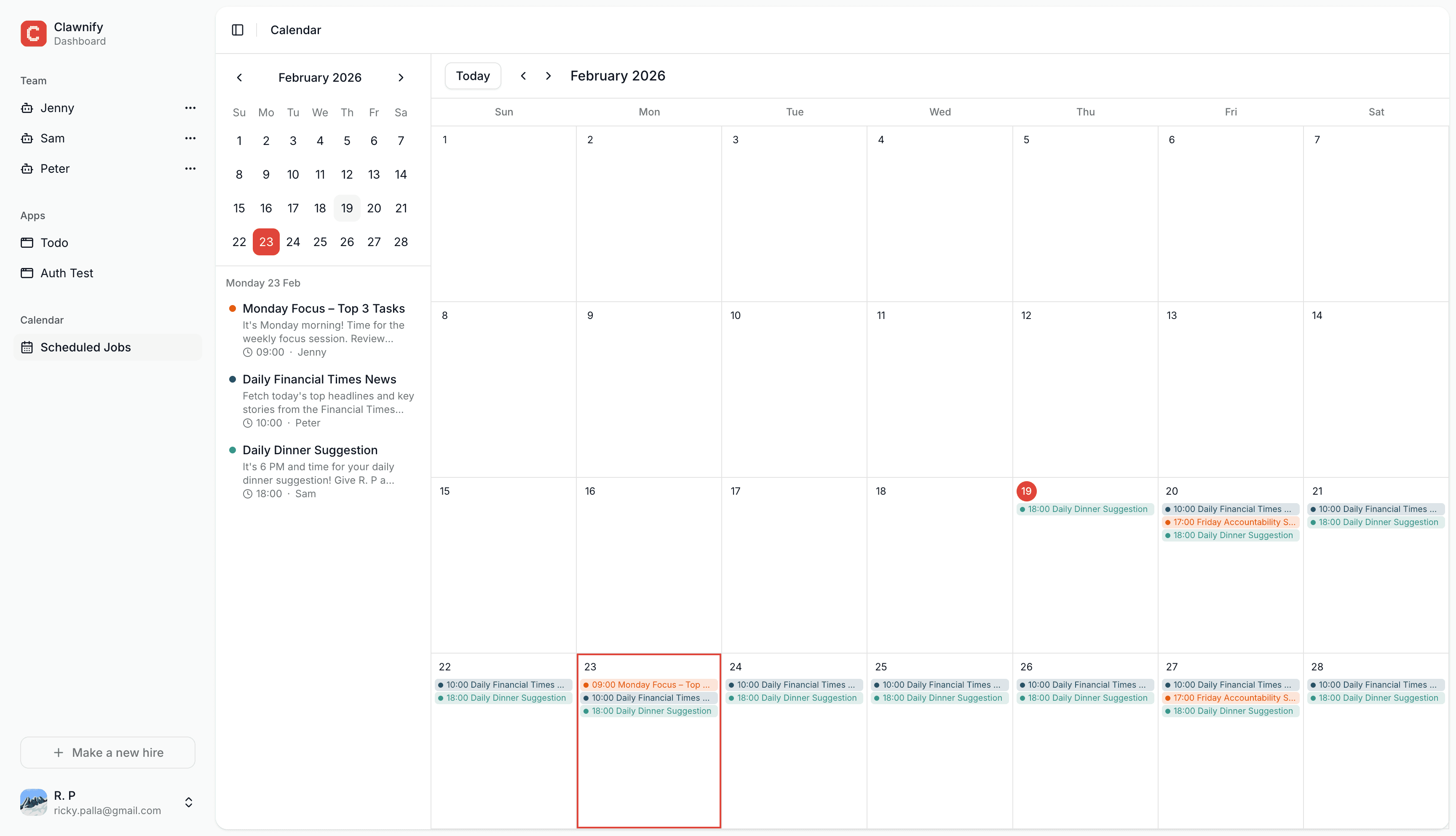

Running a single AI agent on a local Mac Mini is a brilliant "Day 1" solution. It offers privacy, low cost, and a fun hardware project for the tech-inclined business owner. However, as your reliance on AI agents grows — moving from a "cool assistant" to a "critical business process" — the limitations of a single local box become apparent. If your home internet goes down, your office loses power, or your Mac accidentally restarts to install an update, your Always-On Assistant is suddenly offline. For a hobbyist, this is an inconvenience; for a business managing active sales leads, it's a failure.
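If you do run the agent on a local box, a small health check at least tells you when it has gone dark. The sketch below is hypothetical and not part of OpenClaw: it probes the Ollama server's root URL (which answers with HTTP 200 while the server is running) and only alerts after several consecutive failures, so one slow response does not trigger an alarm. A check that runs on the Mac itself goes silent when the Mac does, so it is better run from a second machine; Ollama listens only on localhost by default, so a remote check requires exposing it on the network (for example via the `OLLAMA_HOST` setting).

```python
import time
import urllib.request


def ollama_is_up(base_url: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """True if the Ollama server answers its root URL with HTTP 200."""
    try:
        with urllib.request.urlopen(base_url + "/", timeout=timeout) as resp:
            return resp.status == 200
    except OSError:  # URLError, HTTPError, refused connections, and timeouts
        return False


def should_alert(results: list[bool], threshold: int = 3) -> bool:
    """Alert once the most recent `threshold` checks (threshold >= 1) all failed."""
    recent = results[-threshold:]
    return len(recent) == threshold and not any(recent)


if __name__ == "__main__":
    history: list[bool] = []
    while True:
        history.append(ollama_is_up())
        if should_alert(history):
            print("Ollama failed 3 checks in a row; the agent is likely offline.")
        time.sleep(60)  # check once a minute
```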

This is the natural transition point to a managed platform like Clawnify.com. Clawnify is built on the same robust OpenClaw framework but moves the "brain" of your agent into a professional cloud environment with redundant power, high-speed fiber connectivity, and managed security updates. It eliminates the "hardware anxiety" of keeping a Mac Mini running 24/7 in your office. Instead of debugging Node.js versions or managing Python environments for your local models, you get a dedicated dashboard where you can monitor your AI employees in real-time, scale their resources with a single click, and connect them to thousands of apps via one-click OAuth.

| Scale Factor | Local Mac Mini | Clawnify (Managed) |
|---|---|---|
| Reliability | Single point of failure (power/ISP) | 99.9% uptime SLA |
| Scalability | Hardware limited | Scale up/down on demand |
| Setup Time | 30-60 minutes | < 5 minutes |
| Maintenance | Manual updates / troubleshooting | Fully managed / hands-off |
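To put an uptime percentage in concrete terms, the allowed downtime is the period length × (1 − uptime ÷ 100). The sketch below only converts the percentage; what counts as downtime and how it is measured depends on the provider's actual SLA terms.

```python
HOURS_PER_YEAR = 24 * 365


def allowed_downtime_hours(uptime_pct: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Downtime permitted within a period at a given uptime percentage."""
    return period_hours * (1 - uptime_pct / 100)


if __name__ == "__main__":
    for pct in (99.0, 99.9, 99.99):
        yearly = allowed_downtime_hours(pct)
        monthly_min = allowed_downtime_hours(pct, period_hours=30 * 24) * 60
        print(f"{pct}% -> {yearly:.2f} h/year, {monthly_min:.1f} min per 30-day month")
```

At 99.9%, that is about 8.76 hours per year, or roughly 43 minutes in a 30-day month. A single local box that loses power or reboots for an update can use up a month's budget in one incident.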

The ideal journey for most small businesses is simple: Start with an OpenClaw Mac Mini to learn the ropes, experiment with local models, and automate your first few tasks. Once those agents are generating clear value, migrate the workload to Clawnify for production-grade reliability. This ensures that your AI agents remain active, secure, and reachable across WhatsApp, Slack, and Telegram, no matter what happens to your local office hardware. The future of work is hybrid — and your agentic team should be too.