
The Shift from Co-pilot to Autopilot: Defining the 2026 AI Workforce

Two years ago, AI in the workplace meant a smarter autocomplete. You typed a prompt. It suggested something. You decided. That model is already obsolete. The 2026 AI workforce operates on a fundamentally different principle: autonomous action, not assisted decision-making.

The industry describes this shift as a move from Co-pilot to Autopilot. A Co-pilot AI waits for your input and surfaces options. An Autopilot AI receives a goal, builds a plan, executes tasks across multiple systems, and reports back with results. Three in four organizations (75%) are already deploying autonomous AI agents in some operational capacity. That number will not shrink. The question for SMBs is not whether to engage with this shift. It is how fast they can do it without breaking what already works.

The failure mode we see most often is treating the transition as a software upgrade. A business installs an AI tool, points it at customer support tickets, and expects magic. What they get instead is an expensive chatbot with a confidence problem. The tool has no defined scope, no escalation rules, and no clear ownership of outcomes. It stalls on edge cases. Staff distrust it. It gets quietly abandoned by Q2.

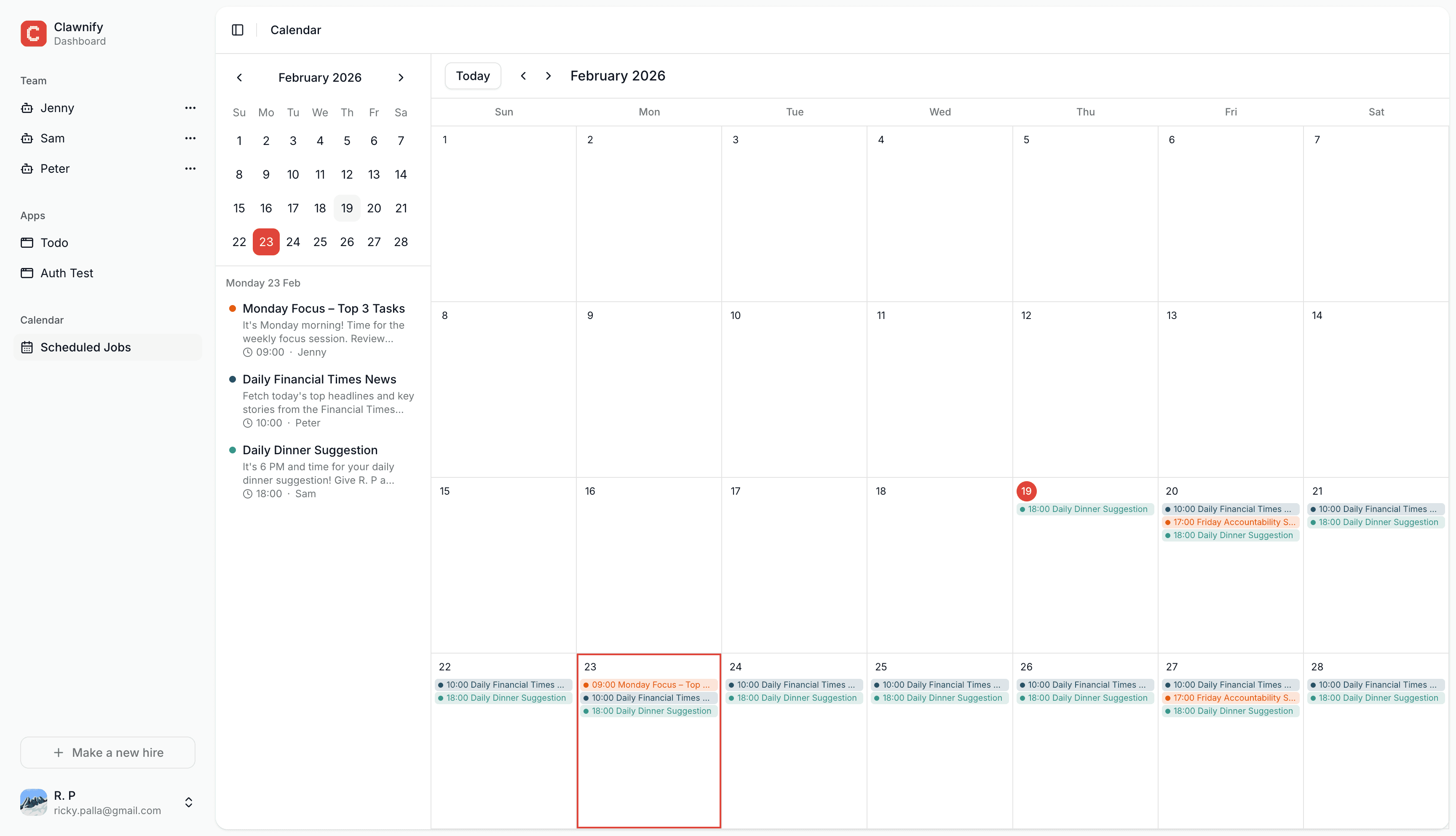

At Clawnify, we've watched this pattern repeat across dozens of SMB implementations. The businesses that succeed stop treating AI as a chat box and start treating it as a team member with a specific job description. That means writing an actual role definition: what this AI agent owns, what it escalates, what success looks like in measurable terms. The moment you frame it that way, the entire deployment changes. You stop asking "what can this tool do?" and start asking "what does this role require?"
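To make "role definition" concrete, here is a minimal sketch of one written as data rather than prose. The `AgentRole` class, its fields, and the example values are hypothetical illustrations, not a Clawnify schema; the point is that ownership, escalation triggers, and measurable targets are spelled out before the agent goes live.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRole:
    """Hypothetical role definition for one AI agent, written like a job description."""
    title: str
    owns: list[str]          # outcomes this agent is responsible for
    escalates: list[str]     # situations that must go to a human
    success_metrics: dict[str, float] = field(default_factory=dict)  # metric -> target

    def should_escalate(self, situation: str) -> bool:
        # Escalate when the situation matches a listed escalation trigger exactly.
        return situation in self.escalates


support_agent = AgentRole(
    title="Inbound Support Agent",
    owns=["first response to inbound inquiries", "ticket triage"],
    escalates=["refund request", "legal complaint", "angry repeat customer"],
    success_metrics={"first_response_minutes": 15.0, "escalation_rate_max": 0.2},
)

print(support_agent.should_escalate("refund request"))
print(support_agent.should_escalate("password reset"))
```

In practice the exact-match escalation check would be replaced by a classifier or an LLM judgment, but keeping the list explicit is what lets you audit the role later.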

The practical difference between Co-pilot and Autopilot breaks down into three operational shifts:

Trigger-based execution: Autopilot agents act on events, not prompts. A new lead enters the CRM and the agent qualifies, scores, and routes it without human initiation.

Multi-step reasoning: These agents don't complete one task. They complete workflows. Research, draft, send, log, follow up.

Defined accountability: The agent owns a measurable output. Not "help with emails," but "own the first-response SLA for inbound inquiries."
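The trigger-based pattern above can be sketched as an event handler: a CRM event fires, and the agent qualifies, scores, and routes the lead in one multi-step pass with no human prompt. The event name `crm.lead_created`, the `on`/`emit` helpers, and the scoring rules are all hypothetical placeholders; a real deployment would subscribe to your CRM's webhook and use your own criteria.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Lead:
    company: str
    employees: int
    industry: str


# Hypothetical event registry: event name -> handler functions.
HANDLERS: dict[str, list[Callable[[dict], str]]] = {}


def on(event_name: str):
    """Register a function to run whenever the named event fires."""
    def register(fn):
        HANDLERS.setdefault(event_name, []).append(fn)
        return fn
    return register


def emit(event_name: str, payload: dict) -> list[str]:
    """Fire an event and collect each handler's result."""
    return [handler(payload) for handler in HANDLERS.get(event_name, [])]


@on("crm.lead_created")
def qualify_score_route(payload: dict) -> str:
    lead: Lead = payload["lead"]
    # Step 1: qualify against simple, illustrative criteria.
    if lead.employees < 5:
        return f"{lead.company}: disqualified"
    # Step 2: score with placeholder rules (not a real scoring model).
    score = min(100, lead.employees // 10 + (30 if lead.industry == "saas" else 10))
    # Step 3: route by score.
    queue = "account_exec" if score >= 50 else "nurture_sequence"
    return f"{lead.company}: score={score}, routed to {queue}"


print(emit("crm.lead_created", {"lead": Lead("Acme", 400, "saas")}))
```

Here the Acme lead scores 70 and is routed to `account_exec`. The agent is triggered by the event itself, which is the core difference from a Co-pilot that waits for a prompt.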

For SMBs, this is the real opportunity. Large enterprises are deploying AI agents at scale but with enormous coordination overhead. A 12-person company can deploy a focused AI employee in a single role, measure it cleanly, and iterate fast. Smaller teams have less bureaucracy blocking the transition from Co-pilot to Autopilot. That is a structural advantage most SMBs are not yet using.

The 2026 AI workforce is not a feature set. It is an org chart decision.

The Anatomy of a Digital Worker: LLMs, Memory, and Tools

An AI employee is not a chatbot. The distinction matters enormously for SMBs evaluating where to invest. A real digital worker has three non-negotiable components: a large language model (LLM) core, a structured memory system, and access to functional tools. Strip any one of these out and you have an expensive autocomplete feature, not a workforce asset.

The LLM core is the reasoning engine. Models like GPT-4o, Claude 3.5, or Gemini 1.5 Pro process instructions and generate outputs. But the LLM alone is stateless. It forgets everything the moment a conversation ends. For a business, that means your AI employee wakes up every morning with no memory of your brand, your clients, or your preferred way of handling a complaint. That is not a workforce. That is a liability.

This is where system prompts and memory layers become the real differentiator. A system prompt is the standing instruction set baked into every interaction. It defines the agent's role, tone, constraints, and business context. Memory layers (short-term, session-based, and long-term) allow the agent to retain information across days, weeks, and client interactions. Treat memory as a first-class infrastructure requirement, not an optional add-on. At Clawnify, we've seen that agents without long-term memory fail to provide ROI after the first week. They lose the business's unique voice. Clients notice immediately.
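Because the LLM itself is stateless, the agent has to rebuild its context on every call. A minimal sketch, assuming a chat-style message list of `{"role", "content"}` dicts (a common convention, not any specific vendor's API): the system prompt comes first, then durable client facts from long-term memory, then a bounded window of recent turns. `AgentMemory`, `SYSTEM_PROMPT`, and "Example Co." are hypothetical.

```python
from collections import deque

SYSTEM_PROMPT = (
    "You are the customer support agent for Example Co. "  # hypothetical business
    "Tone: warm, concise. Never promise refunds; escalate them to a human."
)


class AgentMemory:
    """Sketch of two memory layers: a bounded short-term buffer and a long-term fact store."""

    def __init__(self, short_term_turns: int = 6):
        self.short_term = deque(maxlen=short_term_turns)  # recent turns; oldest drop out
        self.long_term: dict[str, list[str]] = {}          # client id -> durable facts

    def remember_turn(self, role: str, content: str) -> None:
        self.short_term.append({"role": role, "content": content})

    def remember_fact(self, client_id: str, fact: str) -> None:
        self.long_term.setdefault(client_id, []).append(fact)

    def build_messages(self, client_id: str, user_message: str) -> list[dict]:
        """Assemble the full context a stateless LLM needs on every call."""
        system = SYSTEM_PROMPT
        facts = self.long_term.get(client_id, [])
        if facts:
            system += "\nKnown about this client:\n- " + "\n- ".join(facts)
        return (
            [{"role": "system", "content": system}]
            + list(self.short_term)
            + [{"role": "user", "content": user_message}]
        )


memory = AgentMemory(short_term_turns=2)
memory.remember_fact("client-42", "Prefers email over phone")
memory.remember_turn("user", "Hi, my order is late.")
memory.remember_turn("assistant", "Sorry about that. Let me check.")
memory.remember_turn("user", "Order #1001.")  # pushes the oldest turn out (maxlen=2)
for message in memory.build_messages("client-42", "Any update?"):
    print(message["role"], "|", message["content"][:40])
```

A production system would persist `long_term` in a database or vector store rather than a dict, but the shape of the problem is the same: nothing the model "knows" about your business survives unless you put it back into the context.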

The third pillar is tool access. An AI agent that can only generate text is half-built. Functional tool access means the agent can call APIs, read CRM records, send emails, update spreadsheets, and trigger workflows. This is what separates a digital worker from a digital notepad. For SMBs, the practical tool stack typically includes:

CRM integration (HubSpot, Salesforce, or equivalent)

Calendar and scheduling APIs

Email and messaging platforms

Internal knowledge bases or vector databases for retrieval-augmented generation

E-commerce or ticketing systems relevant to the business vertical
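Tool access usually works by having the model propose a structured call (a tool name plus arguments) that your code validates and executes. The sketch below assumes a JSON shape of `{"name": ..., "arguments": {...}}`; the tool names are hypothetical stubs and do not call real HubSpot, Salesforce, or email APIs. The allow-list check is the important design choice: the agent can only use tools it was explicitly granted.

```python
import json
from typing import Any, Callable

# Hypothetical tool registry; each function is a stub standing in for a real integration.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Expose a Python function to the agent under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def lookup_crm_contact(email: str) -> dict:
    # Stub for a CRM read.
    return {"email": email, "stage": "trial", "owner": "dana"}


@tool
def send_email(to: str, subject: str) -> str:
    # Stub for an email-platform call.
    return f"queued email to {to}: {subject}"


def dispatch(tool_call_json: str) -> Any:
    """Execute a model-proposed tool call of the form {"name": ..., "arguments": {...}}."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        # Refuse anything not explicitly granted rather than guessing.
        raise ValueError(f"tool not allowed: {name}")
    return TOOLS[name](**call["arguments"])


# In a real agent this JSON would come from the LLM's tool-calling output.
print(dispatch('{"name": "lookup_crm_contact", "arguments": {"email": "a@b.com"}}'))
```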

Multi-agent architectures take this further. Instead of one generalist agent, you deploy specialized agents — one for customer support, one for lead qualification, one for internal reporting — coordinated by an orchestrator. Clawnify's model demonstrates how these agents hand off tasks, share memory, and escalate edge cases to humans. The result is a workforce that scales without proportional headcount costs.
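Here is one way the orchestrator pattern could look, as a minimal sketch rather than Clawnify's actual implementation. It assumes each specialist returns an output plus a self-reported confidence score; the orchestrator routes by task type, writes every result to a shared log that later agents can read (the handoff), and escalates to a human when no agent owns the task or confidence falls below a threshold. `Orchestrator`, `Result`, the stub agents, and the 0.7 cutoff are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Result:
    output: str
    confidence: float  # 0.0 to 1.0, self-reported by the (hypothetical) agent


@dataclass
class Orchestrator:
    """Routes tasks to specialist agents, shares memory, and escalates edge cases."""
    agents: dict = field(default_factory=dict)          # task type -> agent function
    shared_memory: list = field(default_factory=list)   # log every agent can read
    escalation_threshold: float = 0.7                   # illustrative cutoff

    def handle(self, task_type: str, payload: str) -> str:
        agent = self.agents.get(task_type)
        if agent is None:
            return f"ESCALATE: no agent owns '{task_type}'"
        result = agent(payload, self.shared_memory)
        self.shared_memory.append((task_type, result.output))
        if result.confidence < self.escalation_threshold:
            return f"ESCALATE to human: {result.output}"
        return result.output


# Stub specialists; real ones would call an LLM with their own system prompts.
def support_agent(payload, memory):
    confident = "refund" not in payload
    return Result(f"support reply for: {payload}", 0.9 if confident else 0.4)


def lead_agent(payload, memory):
    return Result(f"qualified lead: {payload}", 0.85)


orchestrator = Orchestrator(agents={"support": support_agent, "lead": lead_agent})
print(orchestrator.handle("support", "where is my invoice?"))
print(orchestrator.handle("support", "I want a refund"))
print(orchestrator.handle("reporting", "weekly summary"))
```

Self-reported confidence is a weak signal on its own; it stands in here for whatever escalation rule your role definition specifies.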

For SMBs, the build-versus-buy question here is real. Most small businesses do not have the engineering capacity to wire these systems from scratch. The smarter path is selecting platforms that expose these three layers — LLM, memory, tools — through configuration rather than code. That is the architecture worth demanding from any AI workforce vendor before signing a contract.


The Economic Impact: Wage Premiums and Productivity Gains

The numbers are no longer speculative. AI-skilled workers now command a 56% wage premium over their non-AI counterparts (according to LinkedIn Workforce Report, 2025). For SMBs, that stat cuts two ways. Hiring AI-literate humans gets expensive fast. But building an AI workforce — deploying autonomous agents alongside a lean human team — sidesteps that cost entirely.

The talent market is already moving. More than half of talent leaders (52%) plan to add autonomous AI agents to their workforce by 2026 (according to Salesforce State of Work Report, 2026). That isn't a prediction. It's a procurement decision already in motion at companies you compete with. SMBs that wait for the trend to "mature" will be competing against leaner operations that moved 18 months earlier.

The productivity case is just as direct. Industrial companies like Britannia have reported a 75% reduction in competency assessment times after integrating AI agents into their workforce evaluation processes (source: Josh Bersin Company, 2025). That kind of compression doesn't just save HR hours. It accelerates how fast a business can onboard, redeploy, and scale its people. For an SMB with no dedicated HR department, that's the difference between growing and grinding.

But here's where most coverage of AI workforce economics gets it wrong. The conversation fixates on speed. Faster hiring. Faster assessment. Faster output. Speed is real, but it's a byproduct. The actual economic lever is headcount independence. We've seen this clearly with our own clients at Clawnify: the real win is the ability to scale sales outreach without adding a single new hire. An AI employee running outbound sequences doesn't take PTO. It doesn't need onboarding. And it doesn't plateau at 80 touches a week the way a human rep juggling a full pipeline does.

For SMBs specifically, this changes the unit economics of growth. Consider what scaling a sales function traditionally requires:

A new SDR at $55,000–$75,000 base salary

3–6 months to full productivity

Management overhead and tooling costs

Attrition risk inside 18 months
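The first two items on that list can be turned into a rough floor on what the ramp period costs. This sketch multiplies the salary range above by the ramp range above and deliberately ignores benefits, management overhead, tooling, attrition, and any partial productivity during ramp, so it understates the true cost.

```python
def ramp_salary_cost(annual_base: float, ramp_months: float) -> float:
    """Base salary paid before a new hire reaches full productivity (salary only)."""
    return annual_base * ramp_months / 12


low = ramp_salary_cost(55_000, 3)   # lowest salary, fastest ramp from the ranges above
high = ramp_salary_cost(75_000, 6)  # highest salary, slowest ramp from the ranges above
print(f"Base salary paid during ramp: ${low:,.0f} to ${high:,.0f}")
```

That works out to $13,750 to $37,500 in base salary alone before a new SDR is fully productive.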

An AI workforce layer replaces the volume work of that role — prospecting, sequencing, follow-up — at a fraction of the cost and from day one. The human SDR then handles what AI still can't: nuanced negotiation, relationship depth, and deal closing.

The economic argument for an AI workforce isn't about replacing people. It's about refusing to let headcount be the ceiling on revenue. For SMBs operating with tight margins and limited hiring budgets, that reframe is everything. The 56% wage premium for AI-skilled humans is a signal. The businesses winning in 2026 aren't paying it — they're building around it.

Building Your Multi-Agent System for Sales and Operations

Single-prompt bots are a dead end for SMBs serious about scale. They answer one question, then stop. A multi-agent AI workforce works differently. Each AI employee handles a discrete function—lead qualification, proposal drafting, inventory flagging, follow-up sequencing—and those agents communicate with each other before any output reaches a customer or stakeholder.

The market is moving fast. Gartner projects the co-pilot market will reach $23.1 billion by 2026 (source: gartner.com). That number reflects enterprise adoption, but the architecture driving it is now accessible to SMBs. The cost barrier dropped. The complexity barrier is next.

Here is what a practical multi-agent setup looks like for a small sales and operations team:

Intake Agent: Qualifies inbound leads against your ICP criteria before a human sees them.

Research Agent: Pulls company data, recent news, and competitor context for each qualified lead.

Drafting Agent: Writes the first outreach or proposal using the research output.

Review Agent: Checks tone, accuracy, and compliance before delivery.

Operations Agent: Logs outcomes, updates your CRM, and triggers follow-up sequences.
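The five roles above can be sketched as a pipeline where each agent enriches a shared record and hands it to the next. Every agent here is a stub: the `ICP` criteria, the banned-phrase review, and the log format are hypothetical placeholders for LLM calls and real CRM updates. Note that a draft the review agent rejects is held for a human instead of being sent.

```python
from typing import Callable, Optional

# Hypothetical ICP criteria; replace with your own.
ICP = {"min_employees": 10, "industries": {"saas", "ecommerce"}}


def intake_agent(lead: dict) -> Optional[dict]:
    # Qualify against the ICP before a human ever sees the lead.
    if lead["employees"] >= ICP["min_employees"] and lead["industry"] in ICP["industries"]:
        return lead
    return None


def research_agent(lead: dict) -> dict:
    # Stub: a real agent would pull company data, news, and competitor context.
    return {**lead, "research": f"{lead['company']} recently raised funding"}


def drafting_agent(lead: dict) -> dict:
    return {**lead, "draft": f"Hi {lead['company']} team, congrats on the news..."}


def review_agent(lead: dict) -> dict:
    # Stub compliance check: block drafts containing risky phrases.
    banned = ("guarantee", "risk-free")
    approved = not any(word in lead["draft"].lower() for word in banned)
    return {**lead, "approved": approved}


def operations_agent(lead: dict, crm_log: list) -> dict:
    # Log the outcome; a real agent would update the CRM and start follow-ups.
    crm_log.append((lead["company"], "sent" if lead["approved"] else "held_for_human"))
    return lead


def run_pipeline(lead: dict, crm_log: list) -> Optional[dict]:
    qualified = intake_agent(lead)
    if qualified is None:
        crm_log.append((lead["company"], "disqualified"))
        return None
    stages: list[Callable[[dict], dict]] = [research_agent, drafting_agent, review_agent]
    for stage in stages:
        qualified = stage(qualified)
    return operations_agent(qualified, crm_log)


log: list = []
run_pipeline({"company": "Acme", "employees": 40, "industry": "saas"}, log)
run_pipeline({"company": "Corner Deli", "employees": 4, "industry": "food"}, log)
print(log)  # [('Acme', 'sent'), ('Corner Deli', 'disqualified')]
```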

The review layer is where most SMBs underinvest. They build the drafting agent and ship it directly to customers. That is the mistake. At Clawnify, we've seen multi-agent systems outperform single-prompt bots by 40%—and the gap comes almost entirely from peer-review logic. One agent catches what another misses. The output that reaches your customer has been stress-tested internally, not externally at your expense.

The coordination layer matters as much as the individual agents. Agents need shared memory, clear handoff protocols, and explicit fallback rules. If the research agent returns incomplete data, the drafting agent needs instructions for that scenario. We've seen pipelines collapse not because the AI was wrong, but because no one defined what "wrong" should trigger. Build your failure states before you build your success states.
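"Build your failure states first" can be made explicit as a decision function that runs before the drafting agent does anything. A minimal sketch, assuming hypothetical field names and a three-way outcome: missing core data escalates to a human, missing personalization data falls back to a safe generic template, and only complete data reaches the personalized success path.

```python
from enum import Enum


class Action(Enum):
    DRAFT_PERSONALIZED = "draft_personalized"
    DRAFT_GENERIC = "draft_generic"
    ESCALATE = "escalate_to_human"


REQUIRED = {"company", "contact_name"}        # without these, no safe draft is possible
NICE_TO_HAVE = {"recent_news", "competitor"}  # personalization inputs


def decide_next_action(research: dict) -> Action:
    """Explicit failure states for the drafting step, defined before the happy path."""
    present = {key for key, value in research.items() if value}  # empty values count as missing
    if not REQUIRED <= present:
        return Action.ESCALATE        # incomplete core data: hand to a human
    if not NICE_TO_HAVE <= present:
        return Action.DRAFT_GENERIC   # partial data: fall back to a safe template
    return Action.DRAFT_PERSONALIZED  # full data: the success state


print(decide_next_action({"company": "Acme", "contact_name": "Lee",
                          "recent_news": "Series A", "competitor": "Globex"}))
print(decide_next_action({"company": "Acme", "contact_name": "Lee", "recent_news": ""}))
print(decide_next_action({"company": "Acme"}))
```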

For SMBs, the practical entry point is a two-agent system: one agent that produces, one agent that reviews. That alone eliminates the most common failure mode—unreviewed AI output reaching a prospect. From there, you add agents as your workflow complexity grows. You are not building a chatbot. You are building an AI workforce with internal accountability.
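The two-agent starting point can be sketched as a bounded produce-and-review loop. The producer drafts, the reviewer returns feedback (or `None` to approve), and the loop stops after a fixed number of rounds so an unresolved draft goes to a human rather than a prospect. The stub agents and the "guarantee" rule are hypothetical stand-ins for two LLM calls with different system prompts.

```python
from typing import Callable, Optional


def produce_and_review(
    produce: Callable[[str, Optional[str]], str],
    review: Callable[[str], Optional[str]],
    brief: str,
    max_rounds: int = 3,
) -> tuple[str, bool]:
    """Producer drafts; reviewer returns feedback, where None means approved.

    Returns (final_draft, approved). Unapproved drafts should be routed to a
    human, never sent straight to a prospect.
    """
    feedback: Optional[str] = None
    draft = ""
    for _ in range(max_rounds):
        draft = produce(brief, feedback)
        feedback = review(draft)
        if feedback is None:
            return draft, True
    return draft, False


# Stub agents; real ones would each call an LLM with a different system prompt.
def producer(brief: str, feedback: Optional[str]) -> str:
    draft = f"Quick note about {brief}. We guarantee 10x results!"
    if feedback:  # apply the reviewer's fix on the next round
        draft = draft.replace(" We guarantee 10x results!", "")
    return draft


def reviewer(draft: str) -> Optional[str]:
    return "remove unverifiable claims" if "guarantee" in draft else None


print(produce_and_review(producer, reviewer, "your Q3 hiring plans"))
```

The first draft is rejected, the second passes, and the function returns the cleaned draft with `approved=True`. Capping the rounds keeps two agents from looping indefinitely on a disagreement.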

The businesses winning with this model treat each agent like a role on an org chart. They define scope, define escalation paths, and audit outputs weekly. The technology is not the hard part. The operational discipline is.